Method

Overview

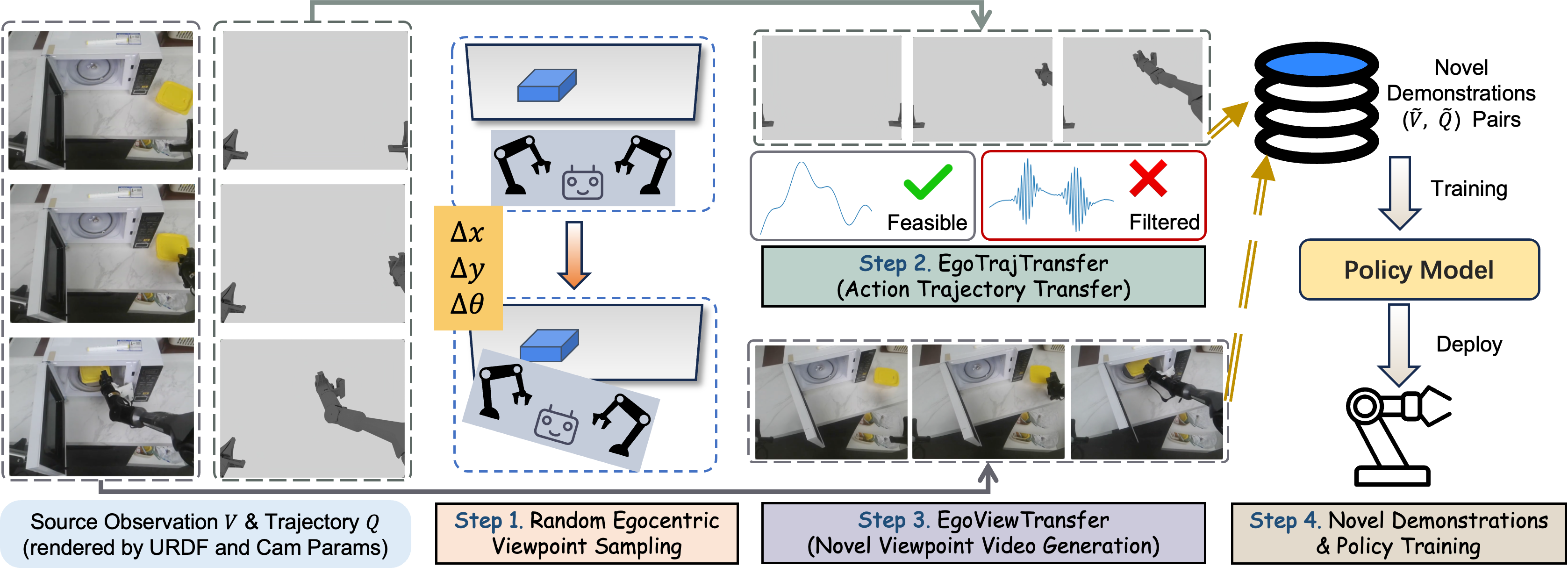

Overview of EgoDemoGen. Given source demonstrations recorded from a standard egocentric viewpoint, we generate novel demonstrations in four steps: (1) novel egocentric viewpoints are sampled via robot base motion \((\Delta x, \Delta y, \Delta \theta)\); (2) EgoTrajTransfer produces kinematically feasible action trajectories \(\tilde{Q}\) adapted to the novel egocentric coordinate frame and filters out infeasible viewpoints via inverse kinematics; (3) EgoViewTransfer synthesizes photorealistic observation videos \(\tilde{V}\) from the novel egocentric viewpoint that depict the transferred robot motion; (4) the generated demonstrations are combined with the original data to train policies that generalize across egocentric viewpoints.
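As an illustration of step (1), the following minimal sketch samples a planar base motion \((\Delta x, \Delta y, \Delta \theta)\) and re-expresses a point from the source base frame in the moved base frame. The function names, the uniform sampling scheme, and the sampling ranges are assumptions made for this example and are not taken from EgoDemoGen; the sketch also assumes that the base motion is expressed in the source base frame.

```python
import math
import random


def sample_base_motion(rng, max_xy=0.1, max_theta=math.radians(15)):
    """Sample a hypothetical base motion (dx, dy, dtheta).

    The uniform box and its limits are illustrative assumptions.
    """
    return (
        rng.uniform(-max_xy, max_xy),
        rng.uniform(-max_xy, max_xy),
        rng.uniform(-max_theta, max_theta),
    )


def to_novel_frame(point_xy, motion):
    """Express a point given in the source base frame in the moved base frame.

    The moved base has pose (dx, dy, dtheta) in the source frame, so the
    point is mapped with the inverse SE(2) transform: p' = R(-dtheta) (p - t).
    """
    dx, dy, dtheta = motion
    px, py = point_xy[0] - dx, point_xy[1] - dy
    c, s = math.cos(dtheta), math.sin(dtheta)
    return (c * px + s * py, -s * px + c * py)


rng = random.Random(0)
motion = sample_base_motion(rng)
target_in_novel_frame = to_novel_frame((0.5, 0.0), motion)
```

The same inverse transform, extended to full 6-DoF poses, is what would carry end-effector targets into the novel egocentric frame before feasibility is checked.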

EgoTrajTransfer

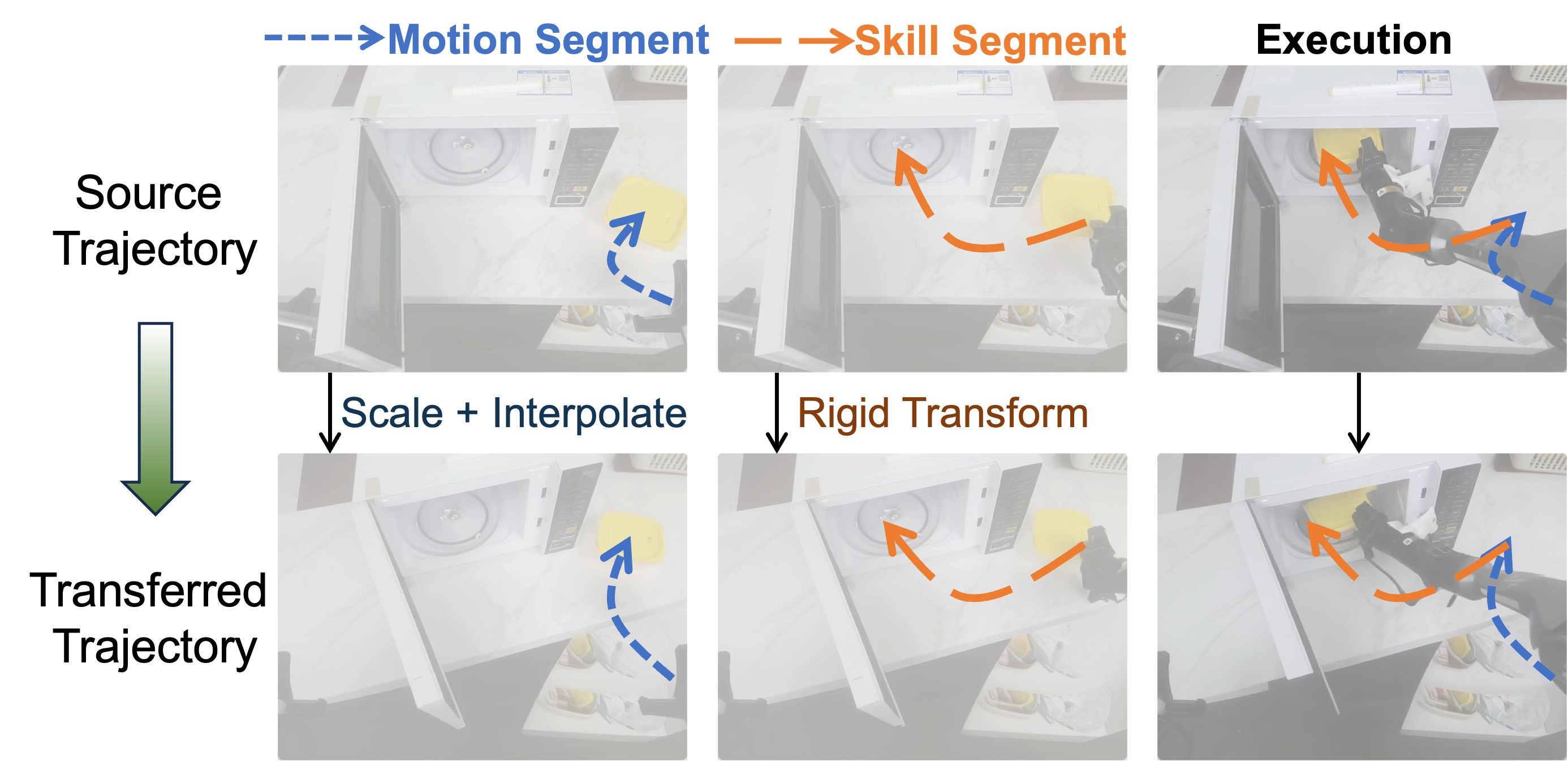

EgoTrajTransfer pipeline. Top: the source trajectory is segmented by gripper state into motion segments (free-space movement) and skill segments (contact-rich manipulation). Bottom: the transferred trajectory is obtained with position scaling and orientation interpolation for motion segments, and with a rigid transformation for skill segments.
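The segmentation step can be sketched as a run-length grouping over a per-timestep gripper signal. This is a minimal sketch under a stated assumption: a timestep is treated as part of a skill segment whenever the gripper opening falls below a threshold, and as part of a motion segment otherwise. The actual segmentation rule, the function name `segment_by_gripper`, and the threshold `closed_below` are hypothetical and not specified in the caption above.

```python
from itertools import groupby


def segment_by_gripper(gripper_opening, closed_below=0.5):
    """Split a trajectory into labeled segments from a gripper signal.

    Returns a list of (label, start, end) tuples with end exclusive.
    Assumption: closed gripper -> "skill", open gripper -> "motion".
    """
    segments = []
    start = 0
    # groupby yields maximal runs of consecutive timesteps with the same state.
    for is_closed, run in groupby(gripper_opening, key=lambda g: g < closed_below):
        length = sum(1 for _ in run)
        label = "skill" if is_closed else "motion"
        segments.append((label, start, start + length))
        start += length
    return segments


segments = segment_by_gripper([1.0, 1.0, 0.2, 0.1, 0.9])
```

Each labeled segment could then be dispatched to the corresponding transfer rule, with motion segments adapted and skill segments moved rigidly.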

EgoViewTransfer

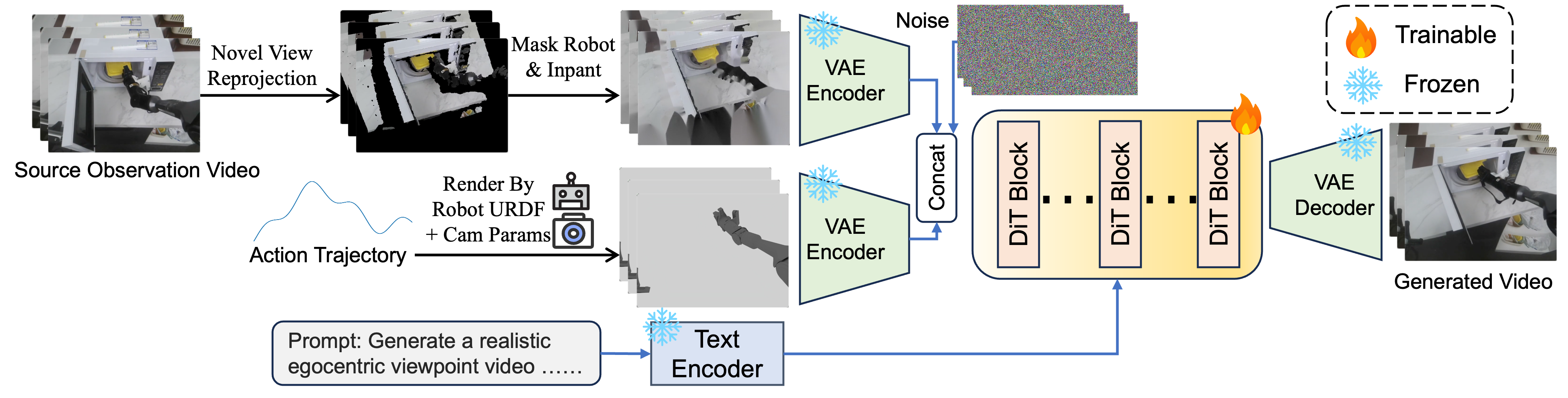

EgoViewTransfer pipeline. We synthesize novel-viewpoint observations in three stages. First, scene video preparation: we reproject the original video to the novel viewpoint, mask the robot region, and inpaint it to obtain a clean background. Second, robot motion rendering: we render the robot motion from the transferred trajectory using the URDF and camera parameters. Third, conditional video generation: we fuse both videos via a DiT-based diffusion model with dual-video conditioning.
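To make the reprojection in the first stage concrete, the sketch below maps a single pixel from the source camera to the novel camera with a pinhole model. It assumes that a per-pixel depth value is available and that both views share the same intrinsics; how EgoViewTransfer actually obtains geometry for reprojection is not stated here, and the function name and parameters are illustrative.

```python
def reproject_pixel(u, v, depth, fx, fy, cx, cy, R, t):
    """Reproject pixel (u, v) with known depth into a novel camera view.

    R (3x3 nested lists) and t (length 3) map points from the source camera
    frame to the novel camera frame: p_novel = R @ p_source + t.
    Returns the new pixel coordinates, or None if the point is behind the camera.
    """
    # Back-project the pixel to a 3D point in the source camera frame.
    p = ((u - cx) / fx * depth, (v - cy) / fy * depth, depth)

    # Apply the rigid transform into the novel camera frame.
    xn, yn, zn = (
        R[i][0] * p[0] + R[i][1] * p[1] + R[i][2] * p[2] + t[i] for i in range(3)
    )
    if zn <= 0:
        return None

    # Project back to the image plane with the same intrinsics.
    return (fx * xn / zn + cx, fy * yn / zn + cy)


identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
shifted = reproject_pixel(50.0, 40.0, 1.0, 100.0, 100.0, 50.0, 40.0,
                          identity, [0.1, 0.0, 0.0])
```

Pixels that land outside the image or are left uncovered after this warp are the regions that the masking and inpainting steps would need to fill.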